Go user-friendly or go home

Working at BSC

Barcelona, post-training week. To my colleagues who have flown to other SoHPC destinations, I would like to report that the city is alive and well, as hot and welcoming as ever, and that, thankfully, the AC in our office is running at full blast.

At the Computer Applications department of the Barcelona Supercomputing Center, a varied team of scientists, developers and artists are working on scientific simulations and the tools that make them possible.

As part of the Scientific Visualization Group for the summer, I’m building an interesting and unexpected set of skills beyond programming, ranging from a bit of colour theory and the psychology behind UI dashboards to the underground subtleties of sketching without being held back by your lack of drawing ability.

A lot of thought and know-how had been put into the project before I started working on it: I joined with a base of ideas that would never have occurred to me, such as splitting the data into 3D and 2D fragments for better visualisation, or using colour to represent connection intensity.

Our workflow involves quickly developing functional prototypes, followed by a critical review of their usability. We have been working closely with some of Alya’s frequent users to create a tool that best suits their needs. Next week, we are planning a thorough test of different interface versions using an eye-tracking camera, which a programmer friend of mine has described as “above and beyond the call of duty”.

Working in a team that is enthusiastic about developing this interface, and developing it well, helps me rediscover my motivation every day. Our office deserves to be commended for its diversity, its interdisciplinary cooperation, its friendly atmosphere and, of course, the coffee machine that grinds beans on the spot.

The project: Some background

Alya is a simulation system designed for large-scale research computations run on supercomputers. Initially used for computational mechanics simulations, it has since expanded to other projects, including a large-scale simulator of the human heart. Thanks to computational resources that were never available before, researchers can study the inner workings of the human body in far finer detail than was previously possible. Work on Alya is continuing into other anatomical systems, with the aim of building a highly accurate model of the human body that helps doctors diagnose pathologies, plan operations and test new drugs.

Developed at the Barcelona Supercomputing Center (BSC), Alya is used in several active projects to run simulations on MareNostrum, Spain’s most powerful supercomputer. A supercomputer is really a room full of computers connected by a fast network. Running a computation, or job, therefore involves partitioning the problem into smaller subproblems that can each be computed by one machine, and arriving at the final result requires tight coordination between those machines.
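To make the idea of partitioning a little more concrete, here is a toy sketch in Python. It is not how Alya actually decomposes a simulation (real codes split an unstructured mesh, not a flat list of numbers); it simply assumes a problem made of independent cells, splits them into near-equal contiguous chunks (one per hypothetical machine), computes a partial result for each chunk, and aggregates the partials at the end. The names `partition`, `solve_part` and `run_job` are illustrative only.

```python
from typing import List, Sequence


def partition(cells: Sequence[float], n_parts: int) -> List[Sequence[float]]:
    """Split the problem into n_parts contiguous subproblems of near-equal size."""
    size, extra = divmod(len(cells), n_parts)
    parts, start = [], 0
    for i in range(n_parts):
        # The first `extra` parts take one additional cell each.
        end = start + size + (1 if i < extra else 0)
        parts.append(cells[start:end])
        start = end
    return parts


def solve_part(part: Sequence[float]) -> float:
    """Stand-in for the work a single machine does on its subproblem."""
    return sum(x * x for x in part)


def run_job(cells: Sequence[float], n_parts: int) -> float:
    """Solve every subproblem, then aggregate the partial results.

    Here the parts are solved one after another; on a cluster each
    call to solve_part would run on its own machine.
    """
    partials = [solve_part(p) for p in partition(cells, n_parts)]
    return sum(partials)


if __name__ == "__main__":
    cells = list(range(10))
    print([len(p) for p in partition(cells, 3)])  # [4, 3, 3]
    print(run_job(cells, 3))  # 285
```

The final answer does not depend on how many parts the problem is split into; only the amount of work per machine (and, in a real code, the amount of communication between them) changes.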

This makes both correctness and efficiency difficult to achieve. For example, a mistake at the beginning of the computation may go unnoticed until the end, when the results are aggregated, so all the computation in between is wasted. Because supercomputer resources are expensive, in terms of both the energy used and the support required, a lot of effort goes into minimising their usage. And, of course, researchers have better things to do with their time than wait for results.
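One way to avoid discovering a mistake only at aggregation time is to check intermediate values as they are produced. The sketch below is a hypothetical illustration, not BSC’s monitoring tool: it assumes each subproblem reports one residual per iteration, and it flags the first step where that value becomes NaN, infinite, or larger than an arbitrary threshold, so that the job could be stopped there instead of running to completion.

```python
import math
from typing import Iterable, Optional


def first_bad_step(residuals: Iterable[float], limit: float = 1e6) -> Optional[int]:
    """Return the index of the first suspicious residual, or None if all look healthy.

    A residual counts as suspicious if it is NaN, infinite, or its magnitude
    exceeds `limit` (an arbitrary, hypothetical threshold).
    """
    for step, r in enumerate(residuals):
        # NaN needs an explicit check: comparisons with NaN are always False.
        if math.isnan(r) or math.isinf(r) or abs(r) > limit:
            return step
    return None


if __name__ == "__main__":
    healthy = [1.0, 0.5, 0.25, 0.125]
    diverging = [1.0, 0.5, float("nan"), 0.1]
    print(first_bad_step(healthy))    # None
    print(first_bad_step(diverging))  # 2
```

Because `first_bad_step` accepts any iterable, it could consume a live stream of residuals (for example, a generator) and stop reading as soon as a problem appears.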

The project: So far

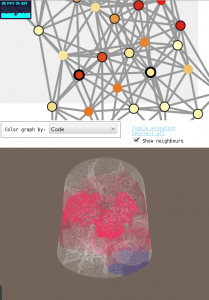

During this internship I am building a user interface that aims to help researchers manage their running jobs, evaluate the seriousness of any issues, and monitor performance metrics. It is based on BSC’s real-time monitoring tool, which detects potential errors in jobs running on an HPC cluster. My part of the project will hopefully allow researchers to diagnose errors by interacting with visual representations of both the simulation problem (as a 3D rendering) and the connectivity between the computing nodes it runs on (as a graph).

The current prototype reads data about the problem at hand and produces a 3D representation of the object being simulated, as well as a 2D graph illustrating the communication between the separate machines. So far, I have added interaction with the 3D model that allows rotation and zooming, as well as the selection of one or more regions of the simulated object, together with a representation of each selected region’s connectivity with other regions.
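The 2D graph can be thought of as a weighted graph whose nodes are partitions (one per machine) and whose edge weights say how much two partitions have to communicate, which is also a natural quantity to encode with colour. The Python sketch below assumes a hypothetical input format (a map from mesh element to owning partition, plus a list of adjacent element pairs) and approximates communication by the number of adjacencies that cross a partition boundary. It then normalises the weights for a colour scale and shows how selecting one or more regions yields their connections to the rest of the model. None of these names come from the actual tool.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple

PartEdge = Tuple[int, int]


def communication_graph(owner: Dict[int, int],
                        adjacency: Iterable[Tuple[int, int]]) -> Dict[PartEdge, int]:
    """Count adjacent element pairs that straddle two different partitions.

    owner maps element id -> partition id; result keys are
    (smaller partition id, larger partition id).
    """
    weights: Counter = Counter()
    for a, b in adjacency:
        pa, pb = owner[a], owner[b]
        if pa != pb:
            weights[(min(pa, pb), max(pa, pb))] += 1
    return dict(weights)


def intensities(graph: Dict[PartEdge, int]) -> Dict[PartEdge, float]:
    """Normalise edge weights to [0, 1], e.g. to drive a colour scale."""
    top = max(graph.values(), default=0)
    return {edge: (w / top if top else 0.0) for edge, w in graph.items()}


def connections_of(graph: Dict[PartEdge, int], selected: Set[int]) -> Dict[int, int]:
    """Total communication between the selected regions and each unselected region."""
    result: Counter = Counter()
    for (pa, pb), w in graph.items():
        if pa in selected and pb not in selected:
            result[pb] += w
        elif pb in selected and pa not in selected:
            result[pa] += w
    return dict(result)


if __name__ == "__main__":
    owner = {0: 0, 1: 0, 2: 1, 3: 1, 4: 2, 5: 2}
    adjacency = [(0, 1), (1, 2), (0, 2), (2, 3), (3, 4), (1, 4), (4, 5)]
    graph = communication_graph(owner, adjacency)
    print(graph)                          # {(0, 1): 2, (1, 2): 1, (0, 2): 1}
    print(intensities(graph))             # {(0, 1): 1.0, (1, 2): 0.5, (0, 2): 0.5}
    print(connections_of(graph, {0}))     # {1: 2, 2: 1}
    print(connections_of(graph, {0, 1}))  # {2: 2}
```

Selecting several regions at once merges them: communication inside the selection is ignored, and only their combined traffic with the rest of the model is reported.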

Most recently, I’ve been working on adding “events” that represent potential problems in the computation. Each event will be presented with its location on the simulation model, diagnostic information, and visual aids such as charts, images and videos. Alongside this, we have been developing and testing different UI designs to find the best way to represent this information. I am looking forward to having a fully working prototype of the events system and, of course, to playing with the eye-tracking equipment at BSC.
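An event, as described above, bundles a location on the model with diagnostic information and optional media. A minimal data model might look like the Python sketch below; the field names, the three-level `Severity` scale and the `events_for` helper are assumptions for illustration, not the project’s actual design. It shows how events could be filtered down to the regions a user has selected and ordered so that the most serious problems appear first.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Iterable, List, Set


class Severity(IntEnum):
    """Hypothetical severity scale; higher values are more serious."""
    INFO = 0
    WARNING = 1
    ERROR = 2


@dataclass
class Event:
    region: int          # id of the region on the 3D model where the problem was detected
    severity: Severity
    message: str         # human-readable diagnostic text
    attachments: List[str] = field(default_factory=list)  # paths or URLs of charts, images, videos


def events_for(events: Iterable[Event], selected: Set[int]) -> List[Event]:
    """Events located in the selected regions, most serious first.

    sorted() is stable, so events of equal severity keep their original order.
    """
    return sorted((e for e in events if e.region in selected),
                  key=lambda e: e.severity, reverse=True)


if __name__ == "__main__":
    log = [
        Event(1, Severity.WARNING, "iteration time above average"),
        Event(2, Severity.ERROR, "NaN in velocity field"),
        Event(1, Severity.ERROR, "solver diverged", ["residuals.png"]),
    ]
    for e in events_for(log, {1}):
        print(e.severity.name, e.message)
```

Using an `IntEnum` for severity keeps the ordering explicit, so the same key could drive both the sort order and, for instance, the colour of an event marker on the model.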