Task-based models on steroids: Accelerating event driven task-based programming with GASNet

Project reference: 1909

One of the grand challenges of HPC is providing programming tools that enable the programmer to write parallel codes capable of scaling to hundreds of thousands, or even millions, of cores. Task-based programming models are seen as one possible solution. In this approach, a problem is split into many separate tasks, each of which runs once its dependencies (such as other tasks having finished) are met. This idea is of great interest to HPC in general because it supports applications with irregular and complex communication patterns, and it allows programmers of more traditional codes to break apart the synchronous nature of their communication, which is often a major limiting factor for performance and scaling.

However, there is a problem! All current task-based models are based on specific assumptions that limit their utility. For instance, many task-based models express dependencies via variables. This is fine in a single memory space, but it is not directly applicable across multiple nodes (which you are forced to use to run large problems), and the workarounds can make the application more complex and/or have a significant performance impact.

To this end, we developed the Event Driven Asynchronous Tasks (EDAT) model, where the programmer is explicitly aware of the distributed nature of their code but still splits it into tasks and drives the interaction between them via events. This can provide significant performance benefits for real-world applications running at large scale (up to 32,768 cores).

Up until this point we have used only the Message Passing Interface (MPI) under the hood as the transport layer for EDAT. However, GASNet, a technology designed to handle the communication for runtimes like EDAT, is potentially a much better fit and also operates much closer to the hardware. The key question this project will look to answer is therefore what performance and scaling benefit a GASNet transport layer for EDAT will provide on the benchmarks we have already used. For example, GASNet should be able to issue hardware interrupts which EDAT can then pick up, rather than requiring a polling thread. The addition of GASNet will be transparent to the end user, but it has the potential to be a game changer for the EDAT library and to further illustrate the important benefits of this approach.

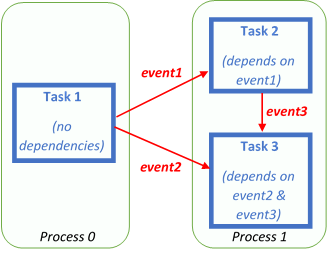

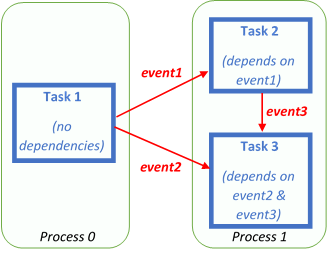

An illustration of how EDAT works, where the programmer has two processes. Process 0 schedules one task (task 1) and process 1 schedules two tasks (tasks 2 and 3). Task 1 has no dependencies, so it runs immediately, whereas tasks 2 and 3 depend on events and won’t run until these arrive. Task 1 fires an event to process 1, which matches the dependency of task 2. Task 1 then fires another event to process 1 and, at the same time, task 2 fires an event to its own process. These two events match the dependencies of task 3, which then runs on a core.
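The flow in the illustration can be mimicked with a small, single-threaded toy in C++. This is only a sketch to make the matching of events to task dependencies concrete: `ToyRuntime`, `schedule`, `fire` and the event names `"a"`, `"b"` and `"c"` are all hypothetical and are not the EDAT API, there is no real distribution or threading, and events that match no waiting task are simply dropped.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <string>
#include <vector>

class ToyRuntime;  // forward declaration so task bodies can fire events

// Everything below is a hypothetical toy model, not the EDAT API.
struct Event {
    int target;      // rank of the receiving process
    std::string id;  // identifier that task dependencies are matched against
};

struct Task {
    int process;                       // rank that scheduled the task
    std::string name;
    std::vector<std::string> pending;  // event ids the task still waits for
    std::function<void(ToyRuntime&)> body;
    bool done;
};

class ToyRuntime {
public:
    void schedule(int process, const std::string& name,
                  const std::vector<std::string>& deps,
                  const std::function<void(ToyRuntime&)>& body) {
        assert(body && "a task needs a body");
        tasks_.push_back(Task{process, name, deps, body, false});
    }

    void fire(int target, const std::string& id) {
        queue_.push_back(Event{target, id});
    }

    // Runs until no task is runnable and no event is queued.
    // Returns the task names in the order they ran.
    std::vector<std::string> run() {
        std::vector<std::string> order;
        bool progress = true;
        while (progress) {
            progress = false;
            // Run every task whose dependencies have all been met.
            for (std::size_t i = 0; i < tasks_.size(); ++i) {
                if (tasks_[i].done || !tasks_[i].pending.empty()) continue;
                tasks_[i].done = true;
                order.push_back(tasks_[i].name);
                // Copy the body first: it may schedule tasks and grow tasks_.
                std::function<void(ToyRuntime&)> body = tasks_[i].body;
                body(*this);
                progress = true;
            }
            // Deliver one queued event, in firing order.
            if (!queue_.empty()) {
                Event e = queue_.front();
                queue_.pop_front();
                deliver(e);
                progress = true;
            }
        }
        return order;
    }

private:
    // Matches an event against the first waiting task on the target process.
    void deliver(const Event& e) {
        for (Task& t : tasks_) {
            if (t.done || t.process != e.target) continue;
            std::vector<std::string>::iterator it =
                std::find(t.pending.begin(), t.pending.end(), e.id);
            if (it != t.pending.end()) {
                t.pending.erase(it);
                return;
            }
        }
    }

    std::vector<Task> tasks_;
    std::deque<Event> queue_;
};

// Rebuilds the scenario from the illustration and returns the run order.
std::vector<std::string> run_illustration() {
    ToyRuntime rt;
    rt.schedule(0, "task 1", {}, [](ToyRuntime& r) {
        r.fire(1, "a");  // matches the dependency of task 2
        r.fire(1, "b");  // first of the two events task 3 waits for
    });
    rt.schedule(1, "task 2", {"a"}, [](ToyRuntime& r) { r.fire(1, "c"); });
    rt.schedule(1, "task 3", {"b", "c"}, [](ToyRuntime&) {});
    return rt.run();
}
```

Calling `run_illustration()` yields the tasks in the order task 1, task 2, task 3: task 3 is released only after both of its events have arrived, regardless of which process fired them.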

Project Mentor: Nick Brown

Project Co-mentor: /

Site Co-ordinator: Ben Morse

Participant: Benjamin Huth

Learning Outcomes:

This project involves tackling one of the grand challenges of HPC: how to most effectively write highly scalable parallel codes. You will be running on state-of-the-art Cray supercomputers, working with and learning about task-based models. You will also gain exposure to the runtime technologies lower down the stack that support parallel programming APIs. Lastly, you will gain experience in running codes on large-scale parallel machines, as well as in threading and distributed-memory programming.

Student Prerequisites (compulsory):

Strong programming background and willingness to learn. Ideally, the student should know how to use C/C++.

Student Prerequisites (desirable):

Experience with C/C++.

Training Materials:

The ARCHER training course materials (http://www.archer.ac.uk/training/past_courses.php) are a good resource. The EDAT library, with documentation and examples, is at https://github.com/EPCCed/edat and GASNet documentation is at http://gasnet.lbl.gov/

Workplan:

- Task 1: (1 week) – SoHPC training week

- Task 2: (2 weeks) – Gain experience with the existing code base. Agree on the exact scope of work and start preliminary implementation and experimentation towards this. Submit a work plan.

- Task 3: (3 weeks) – Main development phase of the project, where the GASNet transport layer is finalized and benchmarking begins to compare performance against the existing MPI approach. Time permitting, performance tuning will also be carried out.

- Task 4: (2 weeks) – Final tidy up of code and benchmarking experiments. Produce a video demonstration of the work that has been done. Submit the final report.

Final Product Description:

- The development of a transport layer using GASNet for handling the EDAT communications. This will likely be one or more code files.

- Benchmarking results that compare the performance of the GASNet transport layer with that of the existing MPI layer for a number of benchmarks that we have already developed. This will then most likely form the basis for a paper exploring the performance in the context of writing task-based codes in this manner.

Adapting the Project: Increasing the Difficulty:

Whilst the main aim of this project will be to develop a GASNet transport layer and perform benchmark comparisons, there will be the opportunity for more advanced students to then address optimisation aspects of this (including next-generation GASNet-EX) and/or even implement additional transport mechanisms (such as Cray’s DMAPP) for performance comparison.

Adapting the Project: Decreasing the Difficulty:

The code is structured such that each specific transport layer inherits from a generic parent class. As such, the transport should be entirely pluggable, and the only modification needed is to write a new child class. There is already an MPI implementation, which comprises a number of distinct pieces of functionality. Hence, if the student is struggling, we can focus on one or two specific items and still use the existing MPI transport layer for the other parts.
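As a rough sketch of what such a pluggable structure can look like in C++, the example below defines a generic parent class and two children. All names (`Transport`, `LoopbackTransport`, `PartialTransport`, `send`, `poll`) are hypothetical and are not taken from the EDAT code base. An in-memory queue stands in for the existing transport so that the example runs without MPI or GASNet, and the second child replaces only the send path while inheriting everything else.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <string>

// Hypothetical names throughout; the real EDAT classes may look different.
struct Message {
    int source;
    int target;
    std::string event_id;
};

// Generic parent: the rest of the runtime only talks to this interface.
class Transport {
public:
    virtual ~Transport() {}
    virtual void send(const Message& m) = 0;
    // Copies the next message for `rank` into `out`; false if none is waiting.
    virtual bool poll(int rank, Message& out) = 0;
};

// Stand-in for the existing transport: an in-memory queue instead of MPI.
class LoopbackTransport : public Transport {
public:
    void send(const Message& m) override {
        assert(m.target >= 0);
        inbox_.push_back(m);
    }

    bool poll(int rank, Message& out) override {
        for (std::size_t i = 0; i < inbox_.size(); ++i) {
            if (inbox_[i].target == rank) {
                out = inbox_[i];
                inbox_.erase(inbox_.begin() + static_cast<std::ptrdiff_t>(i));
                return true;
            }
        }
        return false;
    }

private:
    std::deque<Message> inbox_;
};

// Child that replaces a single piece of functionality (sending) and
// inherits the rest (polling) unchanged from the existing transport.
class PartialTransport : public LoopbackTransport {
public:
    PartialTransport() : new_path_sends_(0) {}

    void send(const Message& m) override {
        ++new_path_sends_;           // a real child would hand m to the new backend here
        LoopbackTransport::send(m);  // the toy still needs the message to arrive
    }

    int new_path_sends() const { return new_path_sends_; }

private:
    int new_path_sends_;
};

// Code written against the parent works with any child.
std::string roundtrip(Transport& t) {
    Message m = {0, 1, "done"};
    t.send(m);
    Message received = {-1, -1, ""};
    return t.poll(1, received) ? received.event_id : std::string();
}
```

Because `roundtrip` depends only on the parent interface, swapping `LoopbackTransport` for `PartialTransport` needs no change to the calling code, which is the property that allows the work to be narrowed to one or two items.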

Resources:

No major resources needed. The student will need to bring their own laptop running Linux. We will provide time on ARCHER, a Cray XC30, which we host & manage, for this project.

Organisation:

EPCC