How a USB Stick Can Make All the Difference

Hello World!

Quite some time has passed since my last blog post, and a lot of progress has been made on my project in the meantime! After a very warm welcome from my supervisors and the other colleagues working at ICHEC, I immediately started setting up what would keep me occupied for the next couple of weeks: the Neural Compute Stick by Intel Movidius.

This stick was developed specifically for computer vision applications on the edge, with dedicated visual processing units at its disposal for inference tasks on deep neural networks. That last sentence was loaded with quite a lot of terminology, so I’ll go ahead and try to untangle it a bit.

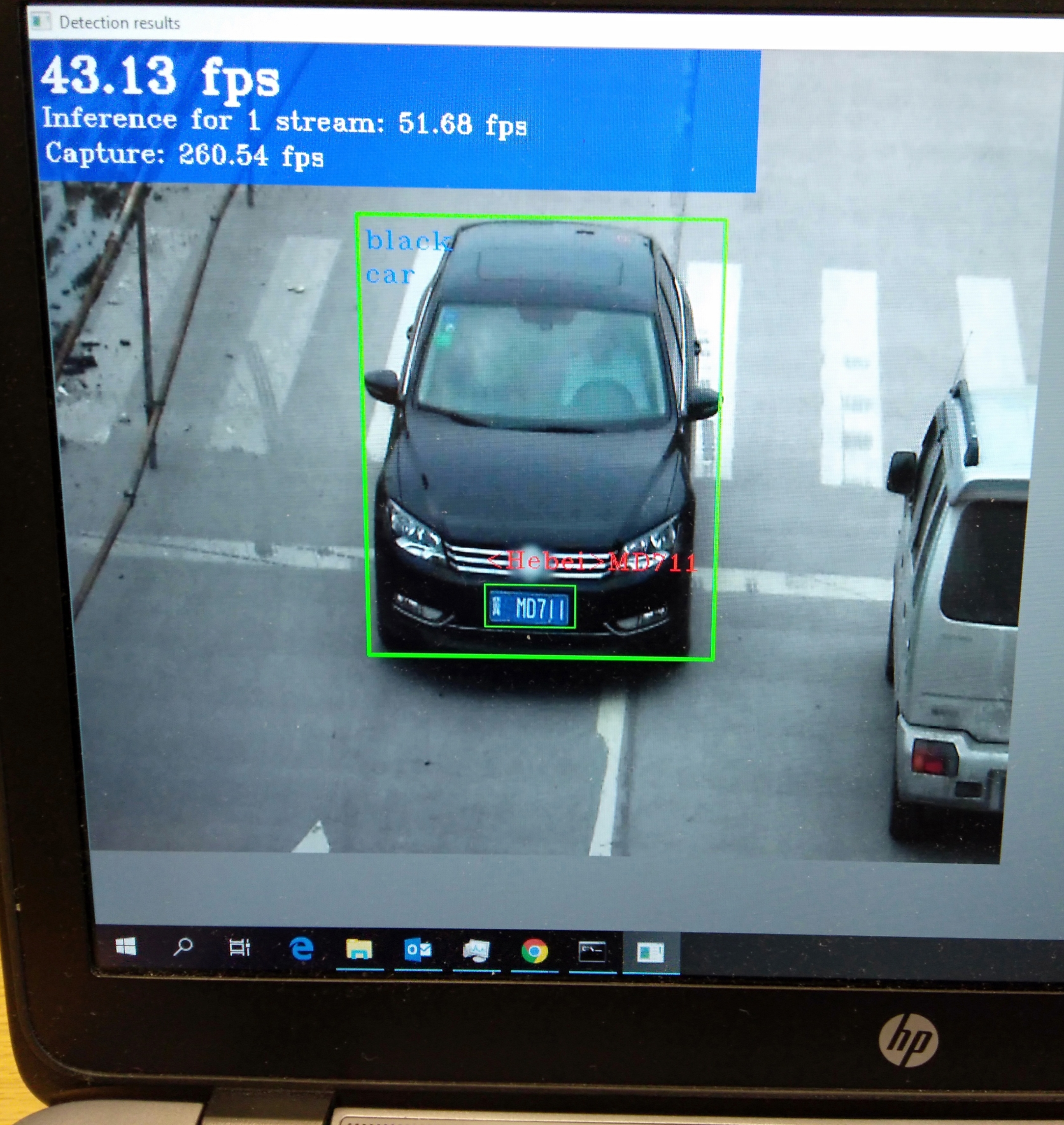

So, what does inference mean in this context? Once a deep neural network has been trained on powerful hardware for hours or sometimes days, it needs to be deployed in a production environment to perform the task it was trained for. These can be, for example, computer vision applications, such as detecting pedestrians or other objects in images, or classifying objects in images, such as different road signs. Simply put, inference refers to using a trained model to get predictions for the inputs fed into it. This can happen on a server in a data center, an on-premise computer or an edge device.

Now the crux with the latter is that applications such as computer vision tend to put quite a high computational load on hardware, whereas edge devices tend to be lightweight, battery-powered platforms. It is of course possible to circumvent this problem by streaming the data that an edge device captures to a data center and processing it there. But the next issue, which the term “edge device” already implies, is that these computing platforms are often situated in quite inaccessible places, like on an oil rig in the ocean or attached to a satellite in outer space. Transmitting data is costly in these cases and comes with a lot of latency, rendering real-time decision making nearly impossible, especially if the data consists of images, which as a rule of thumb tend to be large.

That’s where hardware accelerators like the Neural Compute Stick bring in a lot of value. Instead of sending images or video to some server for further analysis, the data can be processed on the device itself, and only the result, i.e. pure text, is sent to some remote location for storage, statistics or visualization. Such an approach brings numerous benefits, but most importantly it alleviates concerns about latency and communication bandwidth.
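To get a feeling for the bandwidth argument, here is a rough back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the frame size (one uncompressed 1080p RGB frame), the helper names and the made-up detection results are not taken from my project. Real cameras usually compress their images, so the actual gap will be smaller, but the order of magnitude is the point.

```python
import json

# Assumed, illustrative numbers: one uncompressed 8-bit RGB frame in 1080p.
WIDTH, HEIGHT, CHANNELS = 1920, 1080, 3


def raw_frame_bytes(width, height, channels):
    """Size in bytes of one uncompressed frame with 8 bits per channel."""
    return width * height * channels


def result_bytes(detections):
    """Size in bytes of the inference result serialized as UTF-8 JSON."""
    return len(json.dumps(detections).encode("utf-8"))


# Hypothetical inference output for a single frame (made-up values).
detections = [
    {"label": "pedestrian", "confidence": 0.93, "box": [412, 220, 96, 240]},
    {"label": "stop sign", "confidence": 0.88, "box": [1301, 145, 64, 64]},
]

frame_size = raw_frame_bytes(WIDTH, HEIGHT, CHANNELS)
payload_size = result_bytes(detections)

print(f"raw frame: {frame_size} bytes")
print(f"result:    {payload_size} bytes")
print(f"ratio:     roughly {frame_size // payload_size}x smaller")
```

Under these assumptions a single raw frame is several megabytes, while the text result stays in the range of a few hundred bytes, which is why sending only the result is so much cheaper.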

Now what is this magical Neural Compute Stick all about?

The Intel Movidius Neural Compute Stick is a tiny fanless device that can be used to deploy artificial neural networks on the edge. It is powered by an Intel Movidius Vision Processing Unit, which comes equipped with 12 so-called “SHAVE” (Streaming Hybrid Architecture Vector Engine) processors. These can be thought of as a set of scalable, independent accelerators, each with its own local memory, which allows for a high level of parallel processing of input data.

Complementing this stick is the so-called Open Visual Inferencing and Neural Network Optimization (OpenVINO) toolkit, a software development kit which enables the development and deployment of applications on the Neural Compute Stick. This toolkit basically abstracts away the hardware that an application will run on and acts as a “mediator”.

Other than working on my project, I have already gotten a first taste of what living in Ireland is like, and I find it nothing short of amazing! The weather has been very gentle, bringing way more sunshine than I expected, given all the clichés about Irish weather! Living centrally during my stay here also has the advantage that exploring the city is fairly easy and the workplace is just a short walk away!

After this short introduction, I will outline the workflow and setup of the Neural Compute Stick in my next blog post and demonstrate an example application, so stay tuned for more!