OMPI in ARM & closing thoughts

This post ends a series of reads related to ARM in the server space. If you are in the mood for some ARM-related tech, don’t forget to read the previous posts: ARMageddon: Really!!?? and ARM file I/O benchmarks and more.

OMPI under ARM

OMPI, or Open MPI, is an open-source implementation of the Message Passing Interface (MPI) standard. It is a library that manages the communication and data flow when programming parallel computers, allowing a cluster of computers to share the workload of a compatible program or algorithm. Right now, MPI (the standard) is the de facto programming model for the majority of high-performance applications.

Everything was good until ARM wanted to join the HPC party and asked for its piece of the cake, and the following picture was the answer to that request:

Yeah, confusing, right? Well, this describes perfectly how OMPI works under ARM.

First, OMPI expects some Intel-like-flavored numbering style such as:

- Core 0 HW threads 0-3: [0-3]

- Core 1 HW threads 0-3: [4-7]

- Core 2 HW threads 0-3: [8-11] …

But, under the influence of ARM, the numbering style is as follows:

- Socket 0 HW thread 0: [0..31]

- Socket 0 HW thread 1: [32..63]

- Socket 0 HW thread 2: [64..95]
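The two numbering styles listed above can be sketched as a pair of index formulas. This is only an illustration: the 32 cores per socket and 4 hardware threads per core are read off the ranges quoted in the lists, and the function names are made up for this example, not taken from OMPI or hwloc.

```python
# Illustrative sketch only. The constants are assumptions read off the
# ranges quoted above: 32 cores per socket, 4 hardware threads per core.
CORES_PER_SOCKET = 32
SMT = 4


def intel_style_id(core, thread, smt=SMT):
    """ID when the hardware threads of one core are numbered consecutively."""
    return core * smt + thread


def arm_style_id(core, thread, cores=CORES_PER_SOCKET):
    """ID when thread 0 of every core comes first, then thread 1, and so on."""
    return thread * cores + core


def arm_to_intel(arm_id, cores=CORES_PER_SOCKET, smt=SMT):
    """Translate an ID from the second style into the first one."""
    thread, core = divmod(arm_id, cores)
    return intel_style_id(core, thread, smt)


def intel_to_arm(intel_id, cores=CORES_PER_SOCKET, smt=SMT):
    """Translate an ID from the first style into the second one."""
    core, thread = divmod(intel_id, smt)
    return arm_style_id(core, thread, cores)


if __name__ == "__main__":
    # The four hardware threads of core 1 under each style.
    print([intel_style_id(1, t) for t in range(SMT)])  # [4, 5, 6, 7]
    print([arm_style_id(1, t) for t in range(SMT)])    # [1, 33, 65, 97]
```

Under these assumptions, the hardware threads of a single core sit next to each other in the first style, while in the second style they are 32 IDs apart.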

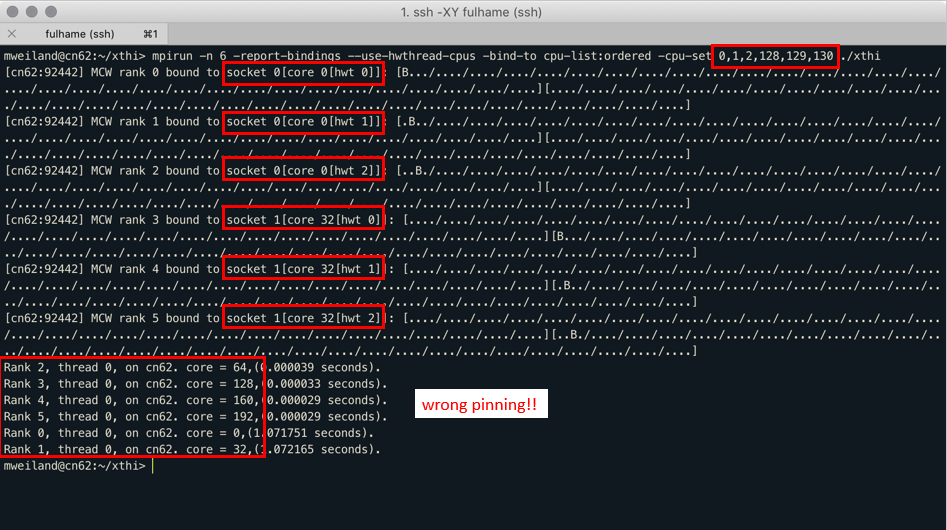

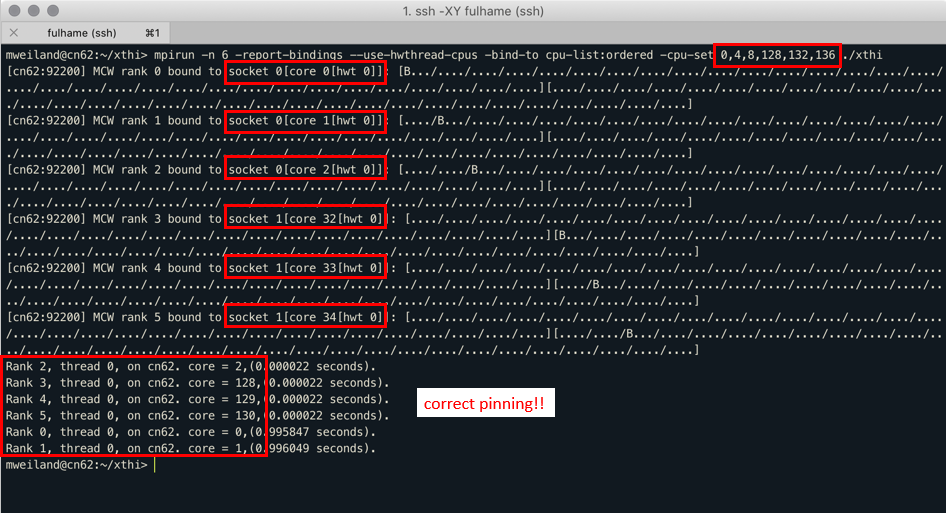

The following pictures, courtesy of Dr Michèle Weiland (EPCC) from the Catalyst meeting, demonstrate this problem:

Because of this issue, the Fulhame cluster runs in SMT1 mode, although it could run in SMT2 or SMT4, which would double or quadruple its maximum theoretical performance®, or at least its hardware thread count.

As you might guess, yes, OMPI might need some tweaking to accept this nomenclature, but since I only had 2 weeks, studying the whole OMPI repository just to make this change happen wasn’t feasible.

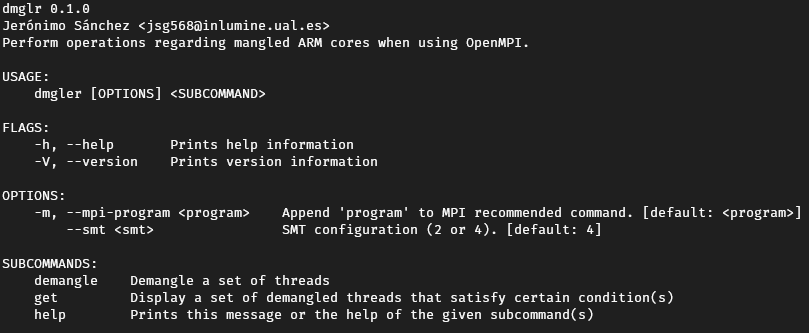

So, instead, I made a CLI tool, named dmglr or demangler, to work around this issue.

It has various subcommands to demangle a given set of threads, or to get a set of them given some conditions.

The output of this CLI is a plain-text line that contains an mpirun command with the correct, demangled input, ready to execute on the cluster. This allows researchers using the Fulhame facility to easily run the CLI in their Slurm script and use its output to configure the node.
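To give an idea of what such a line could look like, here is a minimal sketch. It is not the actual dmglr code: the function names are hypothetical, the thread-major numbering with 32 cores per socket is assumed from the ranges quoted earlier, and whether the resulting IDs are the ones mpirun expects depends on how hwloc numbers the node. The only mpirun options used are `-np` and `--cpu-list`.

```python
import shlex

# Hypothetical helpers, not the real dmglr implementation.
# Assumption: thread t of core c has ID t * cores_per_socket + c.


def hwthreads_of_cores(cores, smt, cores_per_socket=32):
    """Return the IDs of the first `smt` hardware threads of each given core."""
    return sorted(t * cores_per_socket + c for c in cores for t in range(smt))


def mpirun_command(program, ids):
    """Build a single plain-text mpirun line with one process per listed ID."""
    args = [
        "mpirun",
        "-np", str(len(ids)),
        "--cpu-list", ",".join(str(i) for i in ids),
        program,
    ]
    return shlex.join(args)


if __name__ == "__main__":
    ids = hwthreads_of_cores([0, 1], smt=2)
    print(mpirun_command("./app", ids))
    # mpirun -np 4 --cpu-list 0,1,32,33 ./app
```

A Slurm script could capture a line like this in a shell variable and execute it, which is the kind of use described above.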

Closing thoughts about Summer of HPC

Before this global pandemic, I was doing a summer internship in the IT department of a big company, sometimes feeling that I was bothering my peers and mentors by making them look after me or give me made-up jobs. I did not have much of a sense of belonging.

When this opportunity came to me, I was still unsure about what to do during this summer (2020). When the pandemic arrived (luckily), the decision was not up to me anymore as the internship was cancelled, so the choice was crystal clear.

During this summer I have learnt about some of the problems that ARM is facing right now, just as it makes the jump from smartphones to PCs.

Aside from the technical side of this programme, I really got along with my mentor and my colleague, although I still think that if the programme had followed its initial plan, I could have built a friendship with my colleague and the rest of my SummerHPC peers at EPCC.

I have nothing more to add other than that Summer of HPC is a really great experience from both a technical and a personal standpoint.

From the mpirun man page

-cpu-list, --cpu-list

Comma-delimited list of processor IDs to which to bind processes [default=NULL]. Processor IDs are interpreted as hwloc logical core IDs. Run the hwloc lstopo(1) command to see a list of available cores and their logical IDs.

I guess your app reports the physical hwthread it is bound to, but mpirun takes logical hwthreads on the command line.

Open MPI also provides user-friendly ways to bind MPI tasks to (sets of) resources (e.g. hwthread/core/numa/socket), so you should never have to use the --cpu-list option.