How I Learned to Stop Worrying and Love Accelerated Computing

In a normal summer, all Summer of HPC (SoHPC) interns would stay at one of PRACE’s 26 national supercomputing member institutions. Of course, this is not a normal summer, so SoHPC 2021 was designed as a remote program. Unfortunately, this means that experiencing everyday life at an HPC institution and working on site with like-minded HPC practitioners is not possible. On the upside, remote working also grants a large degree of freedom in both work and life. So I decided to think positively, seize the opportunity, and set out on a journey with my laptop, which led me to the PRACE site associated with my project: CINECA in Italy!

CINECA is a non-profit consortium comprising more than 70 Italian universities and research institutions. It is located in Casalecchio di Reno, a small town close to Bologna.

There, I was welcomed by my CINECA supervisor, HPC specialist Massimiliano Guarrasi. Once I had obtained my visitor badge, we opened the “chamber of (HPC) secrets” and entered the machine room accompanied by a specialist guide. Setting foot in this room is exciting, but also dangerous: in an emergency such as a fire, it is flooded with argon within just ten seconds. The warmth and noise inside the machine room were overwhelming and made me aware of the power of the supercomputers we are working with. One of them is MARCONI100 (M100), named after the Italian radio pioneer Guglielmo Marconi.

How powerful is our supercomputer?

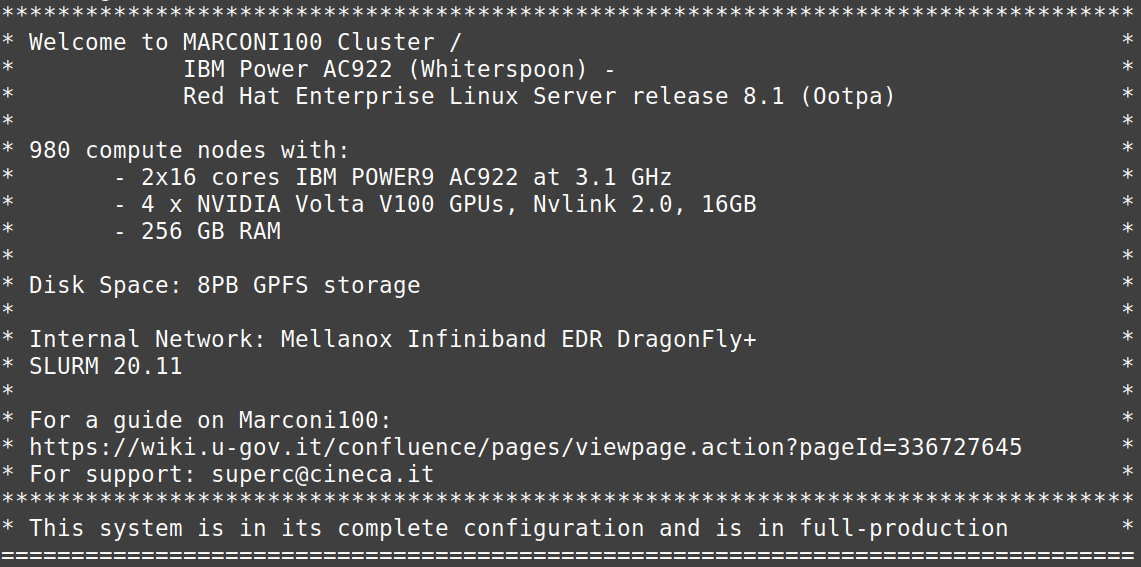

To give you an idea of how powerful M100 is, here are some numbers. While a conventional desktop computer typically has 4 cores (computing units), M100 features a whopping 31,360 cores, about 8,000 times as many!
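The “about 8,000 times” figure is easy to check. The sketch below assumes the commonly quoted M100 configuration of 980 compute nodes with 32 CPU cores each (the post itself only gives the total); the 4-core desktop is the figure from the text.

```python
# Back-of-the-envelope comparison of CPU core counts.
# Assumption: M100 = 980 nodes x 32 CPU cores per node (commonly quoted
# configuration); the post only states the total of 31,360 cores.
NODES = 980
CORES_PER_NODE = 32
DESKTOP_CORES = 4  # "conventional desktop" figure from the text

m100_cores = NODES * CORES_PER_NODE
ratio = m100_cores / DESKTOP_CORES

print(f"M100 cores: {m100_cores}")                            # 31360
print(f"Ratio to a {DESKTOP_CORES}-core desktop: {ratio:.0f}x")  # 7840x
```

The exact ratio is 7,840, which rounds to the “about 8,000” quoted above.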

Importantly, M100 is a so-called accelerated system. This means that it relies not only on central processing units (CPUs), but also on graphics processing units (GPUs). In an everyday context, you probably know GPUs as the hardware that makes video games with excellent, high-resolution graphics possible. In research and industry, however, GPUs are used to execute highly parallel parts of a program (for example, the same arithmetic applied to millions of data elements) simultaneously, which is typically much faster than running them on conventional CPUs. This is a significant advantage for applications such as molecular simulations, image processing, or deep learning. Using GPUs for such applications is known as general-purpose computing on GPUs (GPGPU). For NVIDIA GPUs, this is frequently done with CUDA, NVIDIA’s parallel computing platform and programming model.
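To illustrate what “executing parts of a program in parallel” means in CUDA, here is a minimal sketch that emulates, sequentially and on the CPU, how a CUDA kernel splits work across blocks and threads. It uses SAXPY (`y = a*x + y`), a standard textbook example chosen here for illustration, not something from our project. The function names `saxpy_kernel` and `launch` are hypothetical; only the index formula mirrors CUDA’s built-in `blockIdx.x * blockDim.x + threadIdx.x`. This is plain Python, not real GPU code.

```python
# Sequential, CPU-only emulation of how a CUDA kernel divides work.
# On a real GPU every (block, thread) pair would run concurrently;
# here the two loops simply visit them one after another.

def saxpy_kernel(block_idx, block_dim, thread_idx, n, a, x, y):
    """Body executed by one GPU thread: it handles exactly one element."""
    # Global index, analogous to blockIdx.x * blockDim.x + threadIdx.x in CUDA.
    i = block_idx * block_dim + thread_idx
    if i < n:  # guard: the last block may contain spare threads
        y[i] = a * x[i] + y[i]

def launch(n, a, x, y, block_dim=4):
    """Emulate a kernel launch with enough blocks to cover n elements."""
    grid_dim = (n + block_dim - 1) // block_dim  # ceiling division
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            saxpy_kernel(block_idx, block_dim, thread_idx, n, a, x, y)

x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y = [1.0] * len(x)
launch(len(x), 2.0, x, y)
print(y)  # each element is now 2*x[i] + 1
```

Because no element depends on any other, a GPU can assign each one to its own thread; this independence is what makes such workloads a good fit for accelerators.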

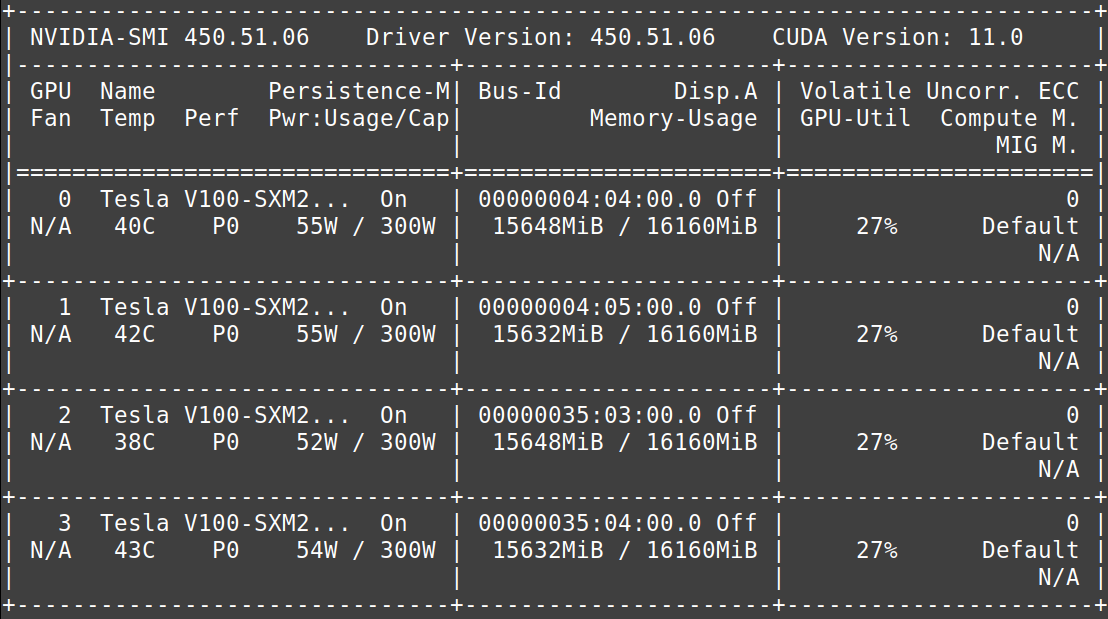

On the M100 supercomputer, we have access to NVIDIA Volta V100 accelerators, which have been claimed to train a neural network up to 32 times faster than a CPU! Together with the GPU-enabled TensorFlow framework we are using to tackle our submarine classification problem, this lets us train our deep learning models much faster. Each M100 node features four V100 GPUs, which Raska and I are using to train our artificial intelligence models in parallel. I expected this to be quite complicated, but using CUDA turned out to be not that difficult, and I soon started to love the power of accelerated computing!
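One common way to train a model on several GPUs of one node is synchronous data parallelism: each GPU processes a slice of the batch, and the per-GPU gradients are averaged (an “all-reduce”) before every update. In TensorFlow this is what `tf.distribute.MirroredStrategy` provides, although the post does not say which strategy we actually used. The sketch below shows only the underlying arithmetic in plain Python, assuming a toy linear model `y = w*x` with mean-squared-error loss, four equal-sized shards, and a hypothetical learning rate.

```python
# Minimal sketch of synchronous data parallelism across 4 "GPUs".
# Assumptions: toy linear model y = w*x, mean-squared-error loss,
# equal-sized shards, hypothetical learning rate.

NUM_REPLICAS = 4  # one replica per V100 on an M100 node

def mse_grad(w, xs, ys):
    """Gradient of mean((w*x - y)^2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def shard(data, k):
    """Split data into k contiguous, equal-sized shards."""
    size = len(data) // k
    return [data[i * size:(i + 1) * size] for i in range(k)]

xs = [float(i) for i in range(1, 17)]  # one batch of 16 samples
ys = [3.0 * x for x in xs]             # targets generated with "true" weight 3.0
w = 0.5

# Each replica computes a gradient on its own shard of the batch ...
local_grads = [
    mse_grad(w, xs_s, ys_s)
    for xs_s, ys_s in zip(shard(xs, NUM_REPLICAS), shard(ys, NUM_REPLICAS))
]
# ... and an all-reduce averages them, so every replica applies the same update.
avg_grad = sum(local_grads) / NUM_REPLICAS
full_grad = mse_grad(w, xs, ys)  # single-device reference

print(avg_grad, full_grad)  # equal up to rounding for equal-sized shards

LEARNING_RATE = 0.001  # hypothetical value
w -= LEARNING_RATE * avg_grad  # one SGD step, identical on all replicas
```

With equal shard sizes, the average of the shard gradients equals the full-batch gradient, so four GPUs reproduce the single-GPU update while each one only processes a quarter of the data.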

The race towards exascale computing

Similar to the moon race, there is also a prestigious race in supercomputing: the goal is to build the first exascale supercomputer. Exascale refers to the number of floating-point operations per second (FLOPS) that a machine is able to perform, which is a truly gigantic number: 1 exaFLOPS means 10¹⁸ (1,000,000,000,000,000,000) operations per second, about as large as the (estimated) number of grains of sand on Earth. Computing on this scale would enable even better predictions, for example in the discovery of novel materials. Even large-scale brain simulations could become feasible! CINECA is one of the institutions leading the race towards this new era with its pre-exascale Leonardo supercomputer, which is part of the EuroHPC Joint Undertaking.
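To make 10¹⁸ operations per second more tangible, the short calculation below estimates how long a desktop computer would need to do what an exascale machine does in one second. The desktop throughput of 100 GFLOPS (10¹¹ FLOPS) is a hypothetical round figure chosen for illustration, not a measured value.

```python
# How long would a desktop need to match one second of exascale work?
# Assumption: the desktop sustains a hypothetical 100 GFLOPS (1e11 FLOPS).
EXA_FLOPS = 10**18       # 1 exaFLOPS = 10^18 floating-point operations per second
DESKTOP_FLOPS = 10**11   # hypothetical desktop throughput

ops = EXA_FLOPS * 1      # operations an exascale machine performs in 1 second
seconds = ops / DESKTOP_FLOPS
days = seconds / 86_400  # seconds per day

print(f"{seconds:.0e} s, i.e. about {days:.0f} days")  # 1e+07 s, i.e. about 116 days
```

Under this assumption, one second of exascale computing corresponds to almost four months of nonstop work on the desktop.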

You might ask yourself how supercomputing and exascale computing impact your life. This CINECA video holds the answer:

To sum up, working with GPU-accelerated high performance computing systems is both powerful and exciting. In the future, the number of accelerated supercomputers is likely to grow.

I am curious to hear about your experiences: have you ever used GPUs for your computations? Do you have an application where you think an accelerated supercomputer could be useful? Do you have experience with remote working? Please let me know in the comments!

Feel free to stay in touch with me on

LinkedIn | ResearchGate | Twitter
